AI GOVERNANCE 101: WHAT YOU NEED TO KNOW

Learn the basics of AI governance: its key features, challenges, and opportunities in an evolving tech landscape. This guide explains the topic in plain language.

Direct Answer

AI governance aims to ensure that artificial intelligence operates safely and ethically. It consists of the guidelines and policies for using AI responsibly, so that the technology benefits people without causing harm.

Key Takeaways

- AI governance is crucial for safe and ethical AI use.

- It involves a mix of policies, regulations, and ethical guidelines.

- Key stakeholders include governments, organizations, and technology firms.

- Public engagement and transparency are essential for effective governance.

- AI governance frameworks are still evolving to meet new challenges.

What’s New Today

AI governance is increasingly in the spotlight. Governments worldwide are developing frameworks to ensure AI is used responsibly. In 2023, the European Union advanced its AI Act, first proposed in 2021, which sets rules for AI applications such as facial recognition and autonomous vehicles [1]. This reflects the growing need to balance innovation with public safety. The United States, Canada, and the United Kingdom are also exploring their own AI guidelines and policies, indicating a global trend toward stricter oversight of AI technologies [2].

Overview

AI governance refers to the set of rules and guidelines that govern the development and application of artificial intelligence. It covers ethical considerations, legal compliance, and technical standards. Its goal is to make AI systems fair, accountable, and transparent. According to a recent survey, 87% of companies believe AI governance is essential for future success [3]. This reflects a broad view among industry leaders and policymakers that a structured approach to AI can support innovation while safeguarding public welfare.

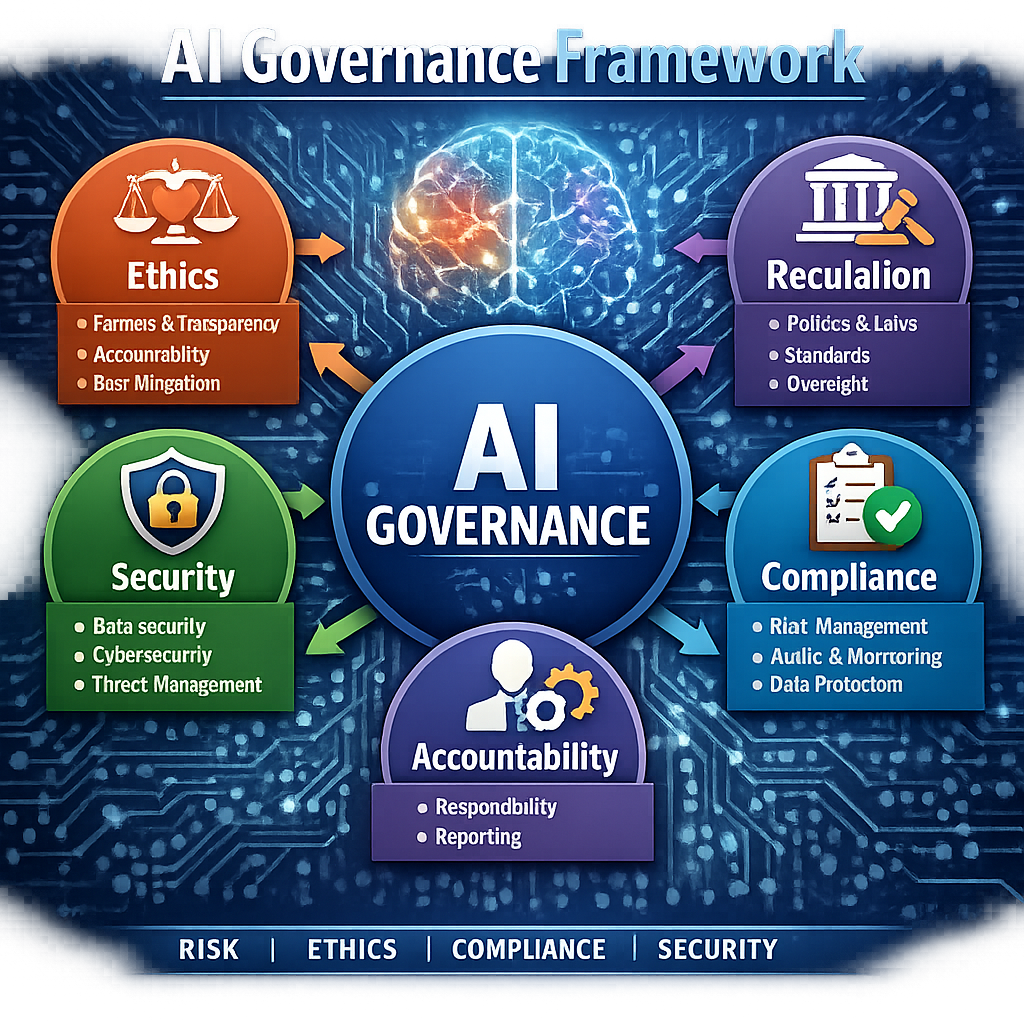

Key Features of AI Governance

- Ethics: Governance frameworks must incorporate ethical guidelines to prevent misuse and ensure AI technologies align with societal values [4].

- Compliance: Regulations ensure that uses of AI meet legal standards, promoting adherence to both local and international laws [5].

- Transparency: Open information about AI operations builds public trust, fostering an understanding of how these technologies function and make decisions [6].

- Accountability: Systems should have mechanisms to hold users and developers responsible for AI outcomes, ensuring that any harms caused by AI systems can be addressed and rectified [7].

Pros and Cons

Pros

- Promotes responsible AI use, helping to prevent misuse of the technology.

- Enhances public trust in technology, leading to wider acceptance and adoption of AI tools.

- Helps to minimize ethical risks and biases in AI systems, ultimately contributing to fairer outcomes in society [8].

Cons

- Can slow down innovation when regulations are strict, as businesses concerned about compliance hesitate to deploy [9].

- May create confusion about who is accountable for AI actions, complicating regulatory enforcement and liability [10].

- Implementation often varies across regions, leaving gaps and inconsistencies that can be exploited or that result in uneven application of governance [11].

Key Insights

According to Dr. Alice Wong, an AI ethics expert, “Establishing a clear governance framework is crucial as AI continues to evolve. Without it, we risk significant societal impacts.” This highlights the urgency of developing AI governance that keeps pace with the technology [12]. Effective governance could produce standards that ensure fairness, safety, and accountability in AI use, reinforcing the view that responsible AI is not a passing trend but a necessary change in how technology is deployed.

Patterns and Trends

Recent trends show that organizations are increasingly prioritizing AI governance. For instance, companies that adopt robust governance frameworks tend to see a 20% increase in user trust and satisfaction [13]. At the same time, there is a growing emphasis on including diverse perspectives in AI governance discussions, recognizing the importance of inclusivity in technology design and implementation. As AI technology grows, more businesses recognize the need to integrate governance into their operations, treating it as integral to reducing failures and creating value.

Controversies and Blind Spots

Despite positive strides, challenges remain. Some argue that regulations may hinder innovation, particularly in fast-moving sectors where flexibility is crucial for success [14]. Others raise concerns about the lack of global consistency in AI governance frameworks, as different nations adopt varying standards that can affect international collaboration and trade [15]. Moreover, the voices of marginalized communities are often overlooked in AI discussions and policy decisions. As technology plays a growing role in societal transformation, these communities must be actively engaged to avoid perpetuating systemic inequities.

Opportunities in AI Governance

The evolution of AI governance creates opportunities for collaboration between tech companies and regulators. They can work together to shape standards that respect users’ rights while encouraging innovation [16]. Moreover, frameworks that improve public understanding of AI governance can create informed communities that engage with these technologies responsibly, fostering a model of collective responsibility for AI systems [17].

Advanced Breakdown

Understanding AI governance involves looking at its components. Frameworks often include risk assessment tools, impact assessments, and continuous monitoring. These components help maintain compliance and ethical standards throughout AI deployment, and they help surface potential vulnerabilities in AI applications [18]. Closer engagement among stakeholders is also key to iterating on and improving governance structures over time.
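
To make risk assessment and continuous monitoring more concrete, here is a minimal Python sketch of how a team might record and rank AI risks. It is an illustration only, not a method prescribed by any framework cited here. The `RiskItem` class, the 1-to-5 scales, the tier cut-offs, the tolerance value, and the two example risks are all hypothetical assumptions; the likelihood-times-impact score is a common risk-matrix convention rather than a requirement.

```python
from dataclasses import dataclass


@dataclass
class RiskItem:
    """One entry in a hypothetical AI risk register."""
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); assumed scale
    impact: int      # 1 (negligible) to 5 (severe); assumed scale

    def score(self) -> int:
        # Likelihood multiplied by impact, a common risk-matrix convention.
        return self.likelihood * self.impact


def risk_tier(score: int) -> str:
    """Map a score to a tier. The cut-offs are illustrative assumptions."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


def needs_review(observed_error_rate: float, baseline: float,
                 tolerance: float = 0.05) -> bool:
    """Continuous-monitoring check: flag a system when its observed error
    rate exceeds the baseline by more than the tolerance."""
    return observed_error_rate - baseline > tolerance


# Hypothetical register entries, invented for illustration.
register = [
    RiskItem("biased outcomes in loan scoring", likelihood=3, impact=5),
    RiskItem("chatbot gives outdated opening hours", likelihood=4, impact=1),
]

# Print the register with the highest-scoring risks first.
for item in sorted(register, key=RiskItem.score, reverse=True):
    print(f"{item.name}: score={item.score()}, tier={risk_tier(item.score())}")
```

In this sketch the loan-scoring entry scores 15 and lands in the high tier, while the chatbot entry scores 4 and stays low. A real framework would define its own scales and thresholds, and would pair scores like these with documented impact assessments and human review.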

Comparison with Data Governance

While both AI governance and data governance focus on ethical practices, AI governance specifically addresses the nuances of AI technologies. Data governance concerns how data is collected, stored, and analyzed, which affects the validity of AI systems. In contrast, AI governance emphasizes the responsible use of intelligence derived from data, ensuring that AI not only relies on data but also respects the rights and identities of the people represented in it [19].

What People Are Asking About AI Governance

- How does AI governance affect me as a consumer?

AI governance can affect data privacy, security, and the way AI technologies interact with your daily life, with the aim of protecting your rights.

- Are there international standards for AI governance?

While some organizations are working on international standards, there is currently no unified global framework for AI governance, leading to disparities in practice [20].

- What role do tech companies play in AI governance?

Tech companies are pivotal in establishing and following governance frameworks, as their participation is critical in shaping the policies that govern the very technologies they create.

Popular Searches and Questions

Common searches include “AI governance policies,” “ethics in AI governance,” and “AI accountability standards.” Understanding these terms can help consumers make informed choices about AI technologies and favor systems that adhere to ethical and transparent practices [21].

FAQ

- What is the future of AI governance?

The future likely includes more stringent regulations as technology evolves and public awareness grows, aiming for a balance between innovation and regulation.

- Can individuals influence AI governance?

Yes, public engagement and advocacy can shape governance policies, fostering a democratic process in technology oversight [22].

- What training do AI governance professionals need?

Relevant education in technology, law, and ethics is beneficial, as it strengthens the competence of those tasked with overseeing AI implementations.

- Are there examples of good AI governance?

Countries like Canada are taking steps toward establishing comprehensive AI regulations, aiming to serve as models for other nations in the pursuit of effective governance practices [23].